Sketches of Disruptive Continuity in the Age of Print from Johannes Gutenberg to Steve Jobs

Disruptive continuity and the history of printing

To return to Alois Senefelder and Ira W. Rubel, it can be stated that these inventors were responsible for two critical and necessary stages in the development of print technology. In the first case, Senefelder was responsible for the transition from the relief-mechanical to the planographic-chemical era of printing. This means that the process developed by Senefelder no longer relied upon the mechanical impression of ink from raised letterforms into the paper but upon the chemically separative properties of oil and water on an otherwise flat surface of lithographic stone for transferring a printing image to paper.

In the second case, Rubel was responsible for the transition from the direct image transfer to the indirect image transfer stage of printing press technology. This means that Rubel redesigned the printing press to include an additional rubber cylinder that stood between the inked planographic surface, from which it accepted the printing image, and the paper, to which it transferred that image with the assistance of an impression cylinder. Together, these two advancements established offset lithography, the high-speed, mass manufacturing method that overtook letterpress printing and became dominant internationally, especially in the second half of the twentieth century.

These two transformations first manifested themselves in the form of accidental events: Senefelder’s chance writing of a laundry list upon a limestone with a crayon and Rubel’s paper misfeed on a lithographic rotary printing press with a rubber blanket on its impression cylinder. As discussed above, that the breakthrough to offset lithography is associated with accidents is entirely consistent with the manner in which human technical progress takes place. The combination of scientific and socio-economic changes that occurred in the eighteenth and nineteenth centuries made necessary a departure of print technology away from the letterpress printing processes and into entirely new planographic, chemical and indirect printing processes of the modern industrial era. While the two innovators were working to develop printing methods in their respective lifetimes, the necessary departure from the system associated with Johannes Gutenberg, preconditioned by more than three centuries of societal change, appeared initially as chance events to both Senefelder and Rubel.

If these two individual inventors had not made these breakthroughs, would offset lithography have been invented by others; would this transformation still have happened sooner or later? Had Senefelder been content to become a playwright and Rubel stayed in the paper making business, would the logic of industrial society and the need for a high-speed and mass manufacturing method of print communications in the twentieth century still have resulted in offset lithography? When looking at Senefelder and Rubel within a broader historical context, an affirmative answer must be given to these counterfactual questions.

The role and interaction of the macro socio-economic (objective) and the micro individual inventor (subjective) driving forces of advancement are accounted for within the theoretical framework of disruptive continuity. Beginning with the societal context, the theory first establishes that there are powerful evolutionary trends in human culture that bind events together in a historical progression, from lower to higher and from simple to more complex forms. This progression of technology is intimately bound up with the forms of social organization and intellectual development of mankind. This is not to say that there have never been reversals of progress or that every technological innovation is a guaranteed contribution to further advancement. As is the case with biological evolution, where some mutations produce traits that are harmful to the survival of a species, some technical innovations have, under certain conditions, represented a step backward or a diversion along a dead-end path.

There is also the matter of technical advancements becoming the means through which society can be destroyed as, for example, would happen in the event of a nuclear world war or as happened in earlier societies that collapsed from the loss of soil fertility due to crop growing and farming practices. This danger arises from a conflict between the increasing sophistication and power of human innovation and the inability of the cultural level and social and political organization of civilization to adequately accommodate the tool-making advancements. However, despite these periodic setbacks and existential threats, the general dynamic of development is one of an ever more complex and greater technical subordination of the properties and laws of nature to the needs of man and an expanding separation of humanity from the blind operation of forces both within the natural environment and society itself, which is also in the end a product of nature.

The topic of the continuous versus the discontinuous view and the role of the individual inventor in the development of man-made implements and artifacts has been the subject of study by historians. George Basalla, a historian of science at the University of Delaware, deals with this question in his book, The Evolution of Technology. Basalla likens the history of technology to biological evolution and correctly objects to those who advocate the view that “inventions are the products of superior persons who owe little or nothing to the past.” He also disputes the position of those who support a theory of innovative discontinuity from what he calls the “more sophisticated formulations” that “scientific revolutions” have rendered technical innovation into discrete phases, each with no relationship to the prior accomplishments.

Basalla says that technology should not be seen in a linear relationship with science and placed in a “subordinate position” to the latter and with the former “erroneously defined as the application of scientific theory to the solution of practical problems.” He writes, “technology is not the servant of science” because it existed long before science and “people continued to produce technical triumphs that did not draw upon theoretical knowledge.” However, Basalla goes on to bend the stick back too far in the opposite direction, writing:

The artifact—not scientific knowledge, not the technical community, not social and economic factors—is central to technology and technological change. Although science and technology both involve cognitive processes, their end results are not the same. The final product of innovative scientific activity is most likely a written statement, the scientific paper, announcing an experimental finding or a new theoretical position. By contrast, the final product of innovative technological activity is typically an addition to the made world: a stone hammer, a clock, an electric motor.

Instead of recognizing that the growth of knowledge and theoretical science develops in tandem with technical advancements, Basalla inverts the false demotion of the product of innovation and says that the artifact stands head and shoulders above science. He finishes by referring to the historian Brooke Hindle who, he says, argued that “the artifact in technology” is superior to any “intellectual or social pursuits” because it is a “product of the human intellect and imagination and, as with any work of art, can never be adequately replaced by a verbal description.”

These are indeed peculiar ideas being advanced by someone who is opposing the standpoint of the discontinuous theory of technology evolution. While Basalla insists upon the theory of continuity, his presentation becomes entirely one-sided. The dichotomy between science and technology, between the individual artifact and the social environment, between discontinuity and continuity in human innovation and between the practical and theoretical sides of invention are all conceived of as fixed categories that never touch each other, are incapable of interpenetrating and cannot be understood in thought as existing simultaneously. In Basalla’s concept of technical innovation there are no breaks or disjunctions in human progress. Instead technical history moves along ever so smoothly, morphing from one object to the next without any abrupt dislocations or transformations.

The preoccupation with continuity to the absolute exclusion of discontinuity and the singular focus on the primacy of the individual artifact mean that one cannot account for the living relationship between disruptive advancements in society and how they drive revolutionary leaps in technology. In the search to find evidence of antecedent developments for major artifacts in human history (such as stone tools, the cotton gin, steam power, the electric motor and the light bulb), Basalla is forced to suppress the really existing discontinuity that occurs in the progression from one innovation to the next. In the end, however, he betrays his initial statement against the role of the “superior persons who owe little or nothing to the past” by embracing the idea that the “human intellect and imagination” and “work of art” are the ultimate expression of technical progress.

Even if it were possible to trace every object of human ingenuity backward in time, there would be a point at which one would arrive at the first man-made thing ever created. Then, like the chicken or egg dilemma, how does the notion that every artifact has a precursor hold up? Outside of a recognition that there was a discontinuous vault in man’s earliest development—a phenomenon that has been repeated throughout history—the first product of man would never have been created and technical progress would never have gotten started. Thus, the theory of a purely continuous evolutionary development of technology does not stand up to scrutiny.

Disruptive continuity, on the other hand, is a theory within which it is possible to understand both the interconnection of every new invention with the past as well as its separation and elevation above the limits of prior artifacts, driven by the increasingly complex accumulation of man’s productive capacities, social organization and intellectual accomplishments. Given the interrelationship of human communications—and especially print communications—with the practical, technical, intellectual and scientific development of society, there is perhaps no better example of the validity of the theory of disruptive continuity.

All forms of human communication have existed as both byproducts of and engines for the advancement of social existence from the earliest primitive civilization to the modern information age. During the early Stone Age, when speech and language—the first form of human communication—emerged somewhere between 1.75 and 3 million years ago, prehistoric man in the Lower Paleolithic was crafting Acheulean stone axes, coordinating hunting and gathering and engaging in other complex social practices that depended upon verbal communications. While these primitive peoples had speech, they had no repository for recording their thoughts or words. As Harvey Levenson of California Polytechnic State University explains in Understanding Graphic Communications:

Early peoples left little information about themselves, as they had no way to transform spoken words into written languages, to pass on their heritage to future generations. Their history and their wisdom died with them. It was not until fairly recently in human history that people figured out ways to record simple events and ideas through pictures and symbols.

The earliest forms of visual communication, such as the cave paintings at Lascaux in southern France, were created by anatomically modern humans somewhere between 17,000 and 35,000 years ago during the Upper Paleolithic (late Stone Age). By this point, humans had migrated to all inhabitable areas of the earth and these primitive tribal peoples were engaged in what is known as “behavioral modernity.” The use of pictorial communication proceeded along with abstract thinking, planning depth, fishing, burial practices, ornamentation, music, dance and other cultural activities connected with more advanced blade technology and tool-making practices.

Although we do not know precisely the meaning of the pictures at Lascaux Cave, it is clear that the nearly 6,000 painted figures are representations of the animals—including bulls, equines, stags and a bear—that existed in Europe during that era. The paintings also include representations of humans and other abstract symbols indicating a high degree of self-awareness and ingenuity. Significantly, the people who made the cave paintings used scaffolding to reach the ceilings and created the colors of red, yellow, and black from a wide range of mineral pigments including compounds such as iron oxide and substances containing manganese. In some instances, the color is thought to have been applied by suspending the pigment in either animal fat or clay as a primitive paint. The colors were swabbed or blotted on, and it appears that the pigment was applied by blowing a mixture through a tube. All of these facts help to build an understanding of the manner in which the technical level of society corresponds with the development of increasingly complex human communications from strictly verbal to visual and pictorial forms.

There are no records of who the individuals were that uttered the very first words of speech or the first sentence containing a noun and a verb or who painted the first picture on a cave wall or who developed the first paint used in cave art. While the location of these occurrences and the anonymous people involved came about in a seemingly random fashion, there is no doubt that these advancements were conditioned by objective material circumstances and became necessary steps in the development of human culture. They were both a contribution to and the consequence of the social and technical environment of the early and late Stone Ages.

Similar connections can be deduced from the stamp seals used to identify ownership in Mesopotamia in 4500 BC and from the earliest form of writing, called cuneiform, which employed pictographs to communicate syllables and sounds and was pressed into clay by Sumerian scribes with a wedge-tipped stylus in 3300 BC. These relationships can also be seen in the hieroglyphics written on papyrus, a precursor to paper, developed in Egypt in 3200 BC. All of these advancements in graphic communications, though developed in an apparently unplanned and haphazard manner, are rooted in the socio-economics of ancient civilization, especially the expansion of commerce and the need for record keeping. These events represent critical chapters in the prehistory of print communications.

In examining the history of printing, it is rare to find surveys that trace the interactions between the evolution of the broader technical foundations of society (punctuated by the unpredictable randomness of inventors and the means through which they arrived at their inventions) and the transitions in the means and methods of print communications. This problem was highlighted by Elizabeth L. Eisenstein in her 1979 volume, The Printing Press as an Agent of Change. Eisenstein explains that the “far reaching effects” of Gutenberg’s invention, which “left no field of human enterprise untouched” and whose consequences are “of major historical significance,” have so far seen very little elaboration in the major texts on the history of print technology. Eisenstein points out that historians may refer to printing as one of the most important inventions, if not the most important invention, of the second millennium, but the dynamic interrelationship of Gutenberg’s breakthrough, as well as subsequent advancements, with the broader processes of human progress is rarely explored:

Insofar as flesh-and-blood historians who turn out articles and books actually bear witness to what happened in the past, the effect on society of the development of printing, far from appearing cataclysmic, is remarkably inconspicuous. Many studies of developments during the last five centuries say nothing about it at all.

Those who do touch on the topic usually agree that the use of the invention had far-reaching effects. Francis Bacon’s aphorism suggesting that it changed “the appearance and state of the whole world” is cited repeatedly and with approbation. But although many scholars concur with Bacon’s opinion, very few have tried to follow his advice and “take note of the force, effect and consequences” of Gutenberg’s invention. Many efforts have been made to define just what Gutenberg did “invent,” to describe how moveable type was first utilized and how the use of the new presses spread. But almost no studies are devoted to the consequences that ensued once printers had begun to ply their new trades throughout Europe. Explicit theories as to what these consequences were have not yet been proposed, let alone tested or contested.

The lack of research that Eisenstein characterizes in her book as “The Unacknowledged Revolution” is due in part to a frequent presentation of print technology history in a linear manner, going from one process to another (or from one innovator to another) in isolation from an analysis or understanding of the broader socio-economic context and the real driving forces of change before and since 1440.

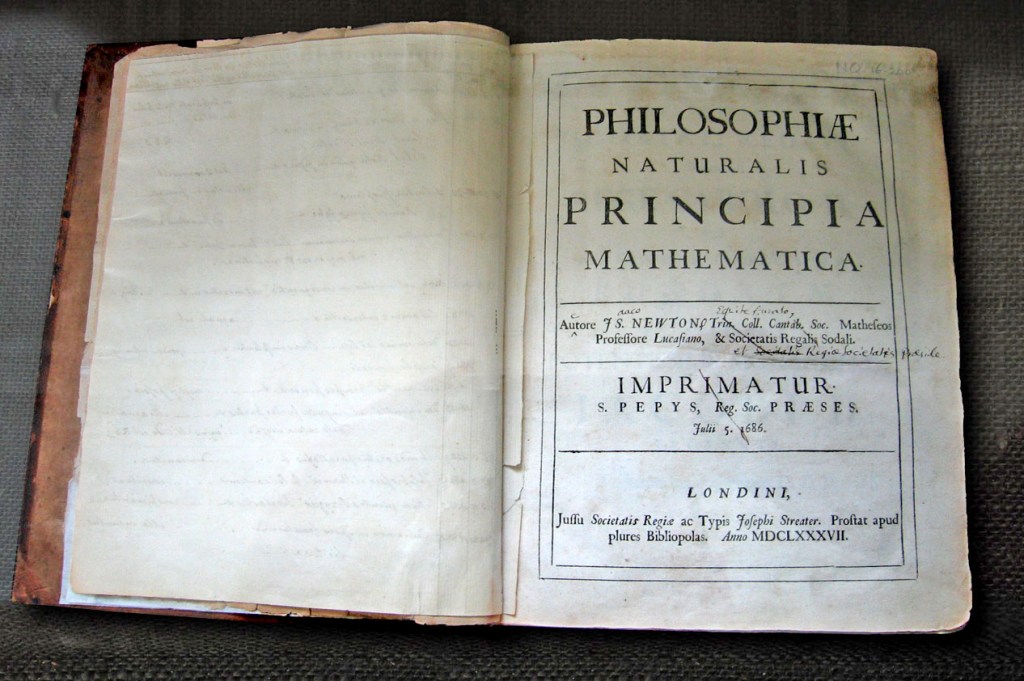

It is now well established that printing was first developed in China, where paper was invented under the Han Dynasty by Ts’ai Lun at the beginning of the second century. The first woodblock printing, known as xylography, in which a complete page of relief characters was carved in a piece of wood, was produced in China in the sixth century. It is also known that moveable type forms made of clay, ceramic, wood and metal were developed first in China between the eleventh and thirteenth centuries. Some of these methods spread across East Asia, including Korea and Japan, and into the western regions of Central Asia. It is speculated that the Asian relief printing techniques made their way into Europe where they were developed further in the form of the handheld metal casting mold and mechanical printing press invented by Johannes Gutenberg in the fifteenth century. It is known that Gutenberg’s typography methods were reintroduced to China in the nineteenth century.

The reason that the birth of printing has become associated with Gutenberg and his invention in Mainz, Germany in 1440, and not with China at least three hundred years earlier, is that the entire mechanized production system created by the fifteenth century inventor was vastly more productive than the hand carving xylographic techniques from the East. Due to a series of other foundational events taking place at that time, Gutenberg’s methods were picked up by a cultural transformation underway in Europe and swept very rapidly from one country to another. Meanwhile, the movable type techniques first pioneered in Asia did not overtake woodblock printing the way they did in the West. This was at least partially due to the fact that Chinese interchangeable type production required the management of as many as forty thousand different characters. While xylography existed in Europe and was carried over into Gutenberg’s time for illumination of printed texts from the earlier form of handwritten manuscripts, the methods he developed represented a paradigm shift away from all previous techniques up to that point in world history. Eventually, woodblock illustrations were replaced by other methods of pictorial reproduction that were derivative of and complementary to the relief process and printing press mechanism of the letterpress era.

Perhaps the best description of the reciprocal impact of Gutenberg’s invention is provided by Will Durant in the sixth volume of The Story of Civilization—The Reformation:

Soon half the European population was reading as never before, and a passion for books became one of the effervescent ingredients of the Reformation age. … The typographical revolution was on.

To describe all its effects would be to chronicle half the history of the modern mind. … Printing replaced esoteric manuscripts with inexpensive texts rapidly multiplied, in copies more exact and legible than before, and so uniform that scholars in diverse countries could work with one another by references to specific pages of specific editions. … Printing published—i.e., made available to the public—cheap manuals of instruction in religion, literature, history and science; it became the greatest and cheapest of all universities, open to all. It did not produce the Renaissance, but it paved the way for the Enlightenment, for the American and French revolutions, for democracy. It made the Bible a common possession, and prepared the people for Luther’s appeal from the popes to the Gospels; later it would permit the rationalist’s appeal from the Gospels to reason. It ended the clerical monopoly of learning, the priestly control of education. It encouraged vernacular literatures, for the large audience it required could not be reached through Latin. It facilitated the international communication and cooperation of scientists. It affected the quality and character of literature by subjecting authors to the purse and taste of the middle classes rather than to aristocratic or ecclesiastical patrons. And, after speech, it provided a readier instrument for the dissemination of nonsense than the world has ever known until our time.

The above timeline illustrates the interrelationship between the milestones in printing innovation between the fifteenth and beginning of the twenty-first centuries and the progression of the foundational eras of society—technological, socio-economic and cultural—along with major historical events in history, science and communications. This visual presentation establishes a framework within which to understand the unfolding process of disruptive continuity in the age of print communications.

The birth of printing was the product of both the increasing volume of hand copying by the scribes and the development of handicraft methods and metallurgy associated with the transition from the Dark Ages to the great cultural awakening of the Renaissance. It was a consequence of and a catalyst for the flowering of art, architecture, philosophy, literature, music, politics and other aspects of culture that were driven by the broad progression of societal change that brought about the discovery of the New World, the circumnavigation of the globe and the opening of trade routes from Europe through Asia and America. The printing press and the associated circulation of printed books and other materials to ever wider layers of the population were instrumental in great social movements such as the Protestant Reformation, sparked by Martin Luther’s critique of the indulgences of the Catholic Church. Behind these changes came the Enlightenment, the development of democratic government, the domination of the commodity economy and the suppression of the institutions of feudalism during the great revolutions in America and France at the end of the eighteenth century. One need only point to the significance of the publication of Thomas Paine’s The Age of Reason to illustrate the fundamental role played by print communications in the intellectual ferment that accompanied the transition from feudal monarchy to democratic capitalism.

The systems of the printing press more or less existed as they had been developed by Gutenberg (based upon fruit presses designed for making cider, wine and oils) for three and a half centuries before any significant design changes were made. The technology developed very slowly, as in an incubator, with a few modifications, surrounded by the steady development of the Renaissance until the rise of the Scientific Revolution and the coming of the first industrial revolution. Then, all of a sudden, new materials and mechanical techniques exploded the old wooden platen machine from Gutenberg’s era and, by the beginning of the nineteenth century, the printing press evolved rapidly to a completely iron apparatus with levers replacing the physical strength of pressmen needed to operate the old screw mechanism to transfer ink to paper. The invention of the paper-making machine in a Paris suburb in the midst of the French Revolution contributed to this evolution and fed the consequent expansion of printed materials with more consistent substrates and improved quality.

At the same time, the press technologies that made contact with the paper evolved first into a metal cylinder-to-platen hybrid and then, by the middle of the 1800s, to a fully cylinder-based rotary press. Production speeds increased dramatically along with the volume of printed material that was needed to support the emergence of industrial society in countries around the world. This transition point in the history of print technology is illustrated in the timeline by the separation between the left side (1400 to 1735) and the right side of the graphic (1735 to 2020) around the middle of the eighteenth century. It was at this point of transition from the Renaissance to the Enlightenment, from feudalism to capitalism, from pre-industrial to industrial society that printing underwent a transformation from handcraft to manufacturing such that the equipment, procedures and roles played by the people in the process no longer resembled anything in Gutenberg’s print shop in Mainz, Germany save the letterpress method that transferred the ink onto the paper.

The introduction of steam power during the second industrial revolution brought mass manufacturing methods to printing with multilevel machines in large printing factories with teams of workers operating them. In the second half of the nineteenth century, the web press was introduced and the quantity of printing establishments in major cities, along with the number of daily newspapers, increased exponentially. The industrial expansion—especially in the US in the lead up to, during and after the American Civil War—made fundamental improvements to the production of letterpress typography with the Linotype machine. The invention of halftone photographic reproduction, the perfection of color printing and the proliferation of type styles were also driven by the needs of business advertising and the growth of newspapers and magazines to accommodate the thirst of the expanding urban populations for news and information.

Although electrification had been underway since the 1880s, the transition away from steam power did not take hold in factories and pressrooms until the second decade of the twentieth century. The military needs of both World War I and World War II drove significant developments in communications technologies, and electronics were introduced into print machinery, bringing with them methods of automation and remote controls. The invention of the transistor and the subsequent revolution in digital technologies (first the integrated circuit and then microprocessors) transformed press controls as levers and knobs were replaced with PLCs (programmable logic controllers).

With the rise of the personal computer in the early 1980s—which enabled the transformative impact of desktop publishing—the entire printing process was revolutionized again with all of the steps from the publisher to the pressroom replaced with software, lasers and direct-to-plate imaging systems. With the invention of digital printing in the 1990s, the computer transformation entered the pressroom and the distinction between office equipment and industrial printing machinery became blurred. The growth and predominance of the Internet and wireless broadband connectivity in the early twenty-first century enabled the integration of printing with online and web-to-print technologies.

A similar analysis can be made of the development of the methodologies for imprinting an image onto a sheet of paper or other substrate over the past six centuries. The generally recognized printing methods—letterpress, intaglio or gravure, lithography including offset lithography, screen printing, flexography and xerography—correspond to different stages of socio-economic development from craft manufacturing to industrial production including the application of metal, rubber and other synthetic materials that rely upon advanced chemistry, photomechanical and electronic processes. The mechanical systems of letterpress (relief printing) and intaglio (recessed printing) are by far the longest surviving methods, with each lasting for more than five centuries well into the twentieth century. Although they continue to be used today for specialized printing purposes, they were essentially displaced by offset lithography by the 1950s. The other three methods—screen printing and flexography at the end of the nineteenth century and xerography in the middle of the twentieth century—were associated with the photomechanical and modern electronic processes including the expansion of printing into areas such as the corporate and legal office, merchandising, retailing and direct mail communications.

Inkjet, the most recently developed method of placing a printed image onto a sheet of paper, requires no image carrier or any intermediary transfer mechanism at all. Inkjet is unique and heralds a new era of print technology because it places an image onto paper directly with an array of nozzles or spray heads. The implications of this transition for the future of print are significant, as it is a distinct departure from the static image reproduction of all previous methodologies, with the exception of xerography, and brings an infinitely variable image to nearly any surface. The integration of this digital process with big data repositories means that any mass-manufactured item can be imprinted with messaging that is relevant to small groups or subgroups of people or even a single individual.

As for the history of typographic design, a subject that is covered in several chapters of this book, it can be established that the style changes over the centuries represent a complex interaction of both cultural and technical influences within the forms of print communications. Clearly, the first blackletter fonts designed by Gutenberg and his contemporaries for book production during the incunabula (approximately 1450-1500) reflected the influence of the handwriting of the scribes and the limitations of matrix production within the new metal mold manufacturing technique. Connected with the artisanry of the individual printing establishment, typography underwent creative changes, with roman and italic types and upper and lower case characters being designed in the later decades of Gutenberg’s lifetime. Then, after the global expansion of printing, the slow progression of the press was expressed in the emergence of Garamond in France. Later, the eruption of a multiplicity of typeface styles and font families during the industrial era expressed the various commercial and mass communication needs for signage, advertising and the column inches of newspapers. The type design variety and volume of printed material exploited the speed and precision of the Linotype machine and pantographic punch-cutting engraver.

With the introduction of photomechanical methods and offset lithography in the twentieth century, typography underwent another transformation that corresponded with the esthetics of modernism. While serif type styles still predominated in text-intensive book and publication design, sans serif types such as Helvetica, Univers and Avant Garde proliferated after World War II and dominated corporate identity, display type and public information signage wherever the Latin alphabet and the English language were the international standard, which by this point was most of the world.

From this review of graphic communications history, it can be seen that there is a logical evolution of stages in the development of printing press machinery, methods of printing, image transfer processes, phases of type production and the pictorial reproduction techniques. These have all proceeded along this path through individual inventors but also independently of them. That is to say, technological advancement in graphic communications has moved through periods of human history and individual innovators were “found” to make the breakthroughs that were necessary at any given moment along this continuum. The specific identity of the innovators and how they were selected is the result of a complex set of circumstances that located the right person at the right time.

This is not to say that the determined vision of the inventors or the specific knowledge, skills and talents they possessed did not play a role in this selection process. These subjective factors are extremely important and can, in certain situations, be the final decisive element in the achievement. However, in the end, these individual traits are themselves part of a concatenation of conditions, such as geographic location, social and economic environment, professional relationships and the availability of resources, that form the foundational background and driving forces of innovation.

Senefelder made a highly significant comment in his autobiographical account when he explained that had he possessed the financial resources to invest in “types, a press and paper,” then lithography “probably would not have been invented so soon.” This was his way of saying that the invention of a purely chemical printing method was historically inevitable and may well have been made by someone else, had it not been for the random circumstance of his inability to purchase the resources required to start a letterpress printing business.

Senefelder understood that it was a matter of time before the discovery of lithography would have happened anyway. After all, his invention coincided with major advancements in chemistry in the late eighteenth century. During this period, known as the “chemical revolution,” the elements that make up water and air were being defined and atomic theory was being suggested while Senefelder was experimenting with the interaction of various chemicals and printing plate surfaces with the use of an oil-based writing tool.

It is interesting to note that, while popular accounts of Senefelder’s invention of lithography often make mention of the “grease pencil” or a “greasy crayon” he used to write the laundry list upon the limestone, there is hardly a reference to the nature of this instrument or the substances out of which it was made. Readers of the stories of the accidental invention of lithography by Senefelder will not find an explanation of the significance of his possession of a crayon at the same time that he had a slab of limestone, even though he mentioned his use of this writing tool throughout his autobiographical account.

Senefelder described the various compositions of the crayon that he experimented with for the purpose of applying it “on the stone plate in dry form like Spanish or Parisian chalk.” He gave eight different combinations of wax, soap, lampblack, spermaceti (whale oil), shellac and tallow from which he created the prepress writing instrument. He wrote, “Wax, mennig, and lampblack are heated and constantly stirred till the mennig dissolves in froth and changes from red to brown. Then the lampblack is rubbed in thoroughly, the whole warmed again properly and shaped into sticks.” This is one of the earliest descriptions of crayon use for artistic purposes and, to some extent, it can be said that Senefelder is the inventor of both lithography and the crayon.

In a similar manner, while there are repeated references to the misfeed on Rubel’s lithographic press that had a “rubber blanket” on its impression cylinder, little time has been devoted to a study of how it came to be that his press contained such a blanket system. The rubber on Rubel’s press was almost certainly of the natural and vulcanized type that had been developed and perfected by Charles Goodyear (US) and Thomas Hancock (UK) by the middle of the previous century. While products such as printing cylinder blankets were derived from rubber trees grown in tropical and subtropical regions of South America, western Africa and Southeast Asia, the material had a tendency to swell and blister under the pressure and heat of the printing process. Synthetic rubbers, especially heat-resistant neoprene, were invented in the 1930s and solved many of these issues. Without the circumstances that brought rubber blankets to lithographic press machinery, the offset method would not have been invented at the turn of the century. Without the subsequent improvement and perfection of these blankets with synthetic materials that took place before and during World War II, the rise of offset lithography would have been delayed.