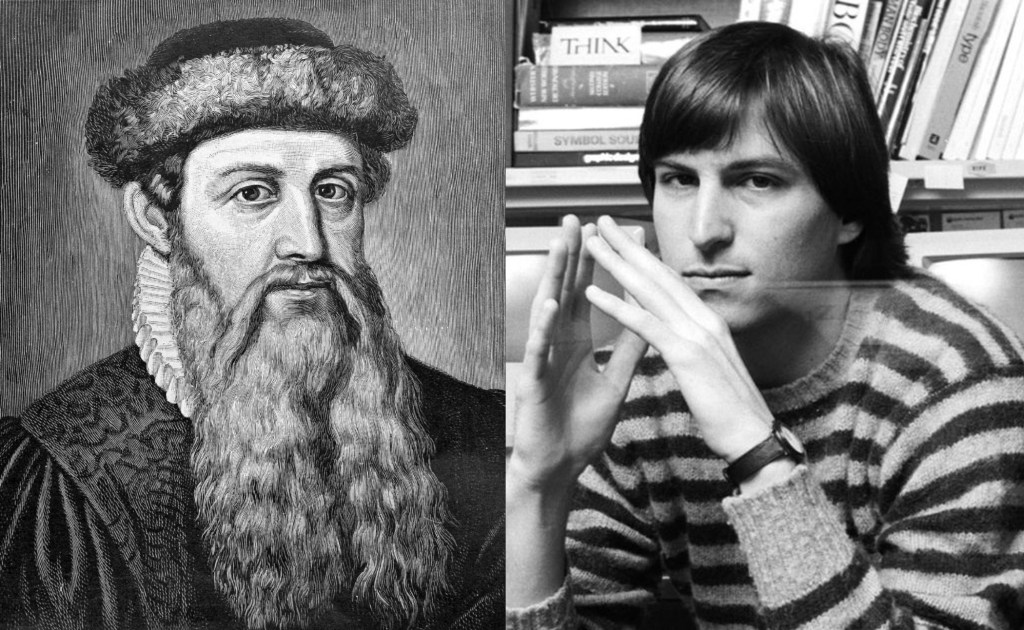

Sketches of Disruptive Continuity in the Age of Print from Johannes Gutenberg to Steve Jobs

Johannes Gutenberg and Steve Jobs

While reviewing nearly six centuries of print technology—through the lives and inventions of significant industry innovators—it became clear that the invention of printing by Johannes Gutenberg on the one side, and the breakthrough of desktop publishing by Steve Jobs on the other, are bookends in the age of print. While it has long been acknowledged that the hand-held type mold and printing press are the alpha in the age of manufacturing production of ink-on-paper forms such as books, newspapers, magazines, etc., the view that desktop publishing is the omega of this age is not necessarily widely held. When viewed within the framework of disruptive continuity, it can be shown that the innovations of Gutenberg and Jobs manifest similar attributes in terms of their dramatic departure from previous methods as well as their connection to the multilayered processes of cultural changes in the whole of society in the fifteenth and twentieth centuries.

The advent in 1985 of desktop publishing—a term coined by Paul Brainerd, founder of Aldus Corporation, the maker of PageMaker—is associated with Steven P. Jobs because he contributed to its conceptualization, he articulated its historical significance, and he was the innovator who made it into a reality. With the support of publishing industry consultant John Seybold, Jobs went on to integrate the technologies and bring together the people that represented the elements of desktop publishing: a personal computer (Apple Macintosh), page layout software (PageMaker), page description language (Adobe PostScript) and a digital laser printing engine (Canon LBP-CX). He demonstrated the integration of these technologies to the world for the first time at the Apple Computer annual stockholders meeting on January 23, 1985, in Cupertino, California, a truly historic moment in the development of graphic communications.

It is a fact that the basic components of desktop publishing had already been developed in the laboratory at the Xerox Palo Alto Research Center (PARC) by the late 1970s. However, due to a series of issues related to timing, cost and the corporate culture at Xerox, the remarkable achievements at PARC—which Steve Jobs had seen during a visit to the lab in 1979 and which inspired his subsequent development of the Macintosh computer in 1984—never saw the light of day as commercial products. As is often the case in the history of technology, one innovator may be the first to theorize about a breakthrough, or even build a prototype, but never fully develop it, while another innovator creates a practical and functioning product based on a similar concept and it becomes the wave of the future. This was certainly the case with desktop publishing, where many of the elements that Jobs would later integrate at the Cupertino demo in 1985—the graphical user interface (GUI), the laser printer, desktop software integrating graphics and text, what-you-see-is-what-you-get (WYSIWYG) printing—were functioning in experimental form at PARC at least six years earlier.

What became known as the desktop publishing revolution was just that. It was a transformative departure from the previous photomechanical stage of printing technology on a par with the separation of Gutenberg’s invention of mechanized metal printing type production from the handwork of scribes. Desktop publishing brought the era of phototypesetting that began in the 1950s to a close. It also eventually displaced the proprietary computerized prepress systems that had emerged and were associated with Scitex in the late 1970s. Furthermore, and just as significant, desktop publishing pushed the assembly of text information and graphic content beyond the limits of ink-on-paper and into the realm of electronic and digital media. Thus, desktop publishing accomplished several things simultaneously: (1) it accelerated the process of producing print media by integrating content creation—including the design of text and graphics into a single electronic document on a personal computer—with press manufacturing processes; (2) it expanded the democratization of print by enabling anyone with a personal computer and laser printer to produce printed material starting with a copy of one; (3) it created the basis for the mass personalization of print media; and (4) it laid the foundation for the expansion of a multiplicity of digital media forms within a decade, including electronic publishing in the form of the Portable Document Format (PDF), e-books and interactive media, and ultimately contributed to the global expansion and domination of the Internet and the World Wide Web.

Just as Gutenberg attempted to replicate in mechanized form the handwriting of the scriptoria, the initial transition to digital and electronic media by desktop publishing carried over the various formats of print, i.e., books, magazines, newspapers, journals, etc., into digital files stored on magnetic and optical storage systems such as computer floppy disks, hard drives and compact discs. However, the expansion of electronic media—which was no longer dimensionally restricted by page size or number of pages but limited by data storage capacity and the bandwidth of the processing and display systems—brought the phenomenon of hyperlinks and drove entirely new communications platforms for publishing text, graphics, photographs, audio and video, eventually on mobile wireless devices. Through websites, blogs, streaming content and social media, every individual can record and share their life story, become a reporter and publisher, or participate in, comment on and influence events anywhere in the world.

The groundbreaking significance of desktop publishing, which straddled both the previous printing and the new digital media ages, can be further illustrated by going through the above description by Will Durant of the impact of Gutenberg’s invention and substituting the new media for printing and the other contemporary elements of social, intellectual and political life for those the historian identified in the 1950s about the fifteenth century:

To describe all the effects of desktop publishing and electronic media would be to chronicle well more than half the history of the modern mind. … It replaced all informational print by republishing it online as text or in more complex graphical formats like PDF, with methods for managing versions and protecting authenticity so that scholars and researchers in diverse countries may work with one another through video streaming and virtual reality tools, allowing entry of new information and data to be gathered, published and shared in real time as though they were sitting in the same room. … Online electronic media made available to the public all of the world’s manuals and procedural instructions; with the development of international collaborative projects such as Wikipedia, it became the greatest tool for learning that has ever existed, at no charge and available to all. It did not produce Modernism or the information age, but it further paved the way for a new stage of human society that had been promised by the American and French revolutions, based on democracy and where genuine equality exists as a fundamental right for everyone. It made the entire library of literature, music, fine and industrial arts, architecture, theater, athletic competition and cinema instantly available anywhere and at any time in the palm of the hand and prepared the people for an understanding of the role of mythology, mysticism and superstition in history by demonstrating the application of a scientific and materialist outlook in everyday life. It ended the monopoly of news by corporate and state publishers and the control of learning by educational institutions managed by the prevailing ruling classes. It encouraged the streaming of live video by anyone to the entire world’s audience of mobile device owners that could never have been reached through printed media. 
It facilitated global communication and cooperation of scientists and enabled the launching of the International Space Station and the sending of multiple probes to the surface of Mars and beyond. It affected the quality and character of all published literature and information by subjecting authors and journalists to the purse and taste of billions of regular working people in both the advanced and lesser developed countries rather than to just the middle and upper classes. And, after speech and print, desktop publishing, online and social media provided a readier instrument for the dissemination of nonsense and disinformation than the world has ever known.

Up to the present, the new media has not yet displaced print the way print eventually replaced the scribes. It is likely that printing on paper will continue to exist well into the future, in much the same way that the ancient art of pen-and-ink calligraphy has continued to exist alongside print for centuries after the last scriptorium was shut down. Meanwhile, electronic media such as e-books have contributed to a resurgence of printed books and, after the initial fascination with electronic devices such as Amazon’s Kindle, the public’s thirst for books has increased, particularly following the onset of the coronavirus pandemic. While the forced separation of people from each other has driven up the use of digital tools such as online video meetings, events and gatherings, the solitary pursuit of reading a printed book has suddenly surged again.

Considerable effort has been made to replicate the experience of reading print media in electronic form. The advent of e-paper—the simulation of the look and feel of ink-on-paper which was pioneered at Xerox PARC in the 1970s (Nick Sheridon, Gyricon)—is an attempt to adapt two-dimensional digital display technologies to mimic the reading experience of the printed page. Since then, studies have shown that paper-based books yield superior reading retention to that of e-books. This is not so much because of the appearance of the printed page and its impact on visual perception as it is the tactile experience and spatial awareness connected with turning physical pages and navigating through a volume that contains a table of contents and an index.

In 2012, during a presentation at the DRUPA International Printing and Paper Expo in Düsseldorf, Germany, Benny Landa, the pioneer of digital printing who developed the Indigo Press in 1993, said the following:

I bet there is not one person in this hall that believes that two hundred years from now man will communicate by smearing pigment onto crushed trees. The question on everyone’s mind is when will printed media be replaced by digital media. … It will take many decades before printed media is replaced by whatever it will be … many decades is way over the horizon for us and our children.

Since Landa’s talk at DRUPA was part of the introduction of a new press with a digital printing method he called nanography, he was emphasizing that we need to live and work in the here and now and not get too far ahead of ourselves. Landa’s nanographic press is based on advanced imaging technology that transfers to almost any printing surface a film of ink pigment that is multiple magnitudes thinner than that of either offset or other digital presses. By removing the water in the inkjet process, the fusing of toner to paper in xerography and the petroleum-based vehicles that carry pigment in traditional offset presses, nanography dramatically reduces the cost of reproduction by focusing on the transfer of ultra-fine droplets of pure pigment (nanoink) first to a blanket and then to the substrate. The aim of nanography is to keep paper-based media economically viable by providing a variable imaging digital press that can compete with the costs of offset lithography and accommodate the needs of the hybrid digital and analog commercial printing marketplace.

While print volumes are in decline, society is not yet ready to make a full transition to electronic media and move entirely away from paper communications. This is a serious dilemma facing those working in the printing industry who are trying to navigate the difficulties of maintaining a viable business in an environment where print remains in demand—in some segments it is growing—but overall, it is a shrinking percentage of economic activity. With greater numbers of people and resources being redirected to communications and marketing products in the more promising and profitable big tech and social media sectors, the printing industry is being starved of talent and economic resources.

Rather than trying to put a date on the moment of transition to a post-printing and fully-digital age of communications, the more relevant question is how it will be accomplished. Landa had it right when he said that today most people believe that two hundred years from now, man will no longer communicate by “smearing pigment onto crushed trees.” When the character of print media is put in these terms, the historical distance of this analog form of communications from the long-term potential of the present digital age becomes clearer. Still, no clear vision or roadmap has yet been articulated for what is required for civilization to elevate itself beyond the age of print.

It is difficult to discuss the moment of a complete progression of human communication methods from Gutenberg to Jobs without reference to the work of the Canadian media theorist Marshall McLuhan. Although McLuhan’s presentation lacked a coherent perspective and tended to drift about in what he called the “mosaic approach,” he made numerous prescient observations about the forms of media and the evolution of communications technology. Sharing elements of the theory of disruptive continuity, McLuhan focused in on the reciprocal interaction of the modes of communication—spoken, printed and electronic—with the broader societal economic, cultural and ideological transformations in world history. He emphasized the way these transitions each fundamentally altered man’s consciousness and self-image. He also recognized that there was presently a “clash” between what he called the culture of the “electric age” with that of the age of print. During an interview with the British Broadcasting Corporation in 1965, McLuhan explained how he saw technology as an extension of man’s natural capabilities:

If the wheel is an extension of feet, and tools of hands and arms, then electromagnetism seems to be in its technological manifestations an extension of our nerves and becomes mainly an information system. It is above all a feedback or looped system. But the peculiarity, you see, after the age of the wheel, you suddenly encounter the age of the circuit. The wheel pushed to an extreme suddenly acquires opposite characteristics. This seems to happen with a good many technologies— that if they get pushed to a very distant point, they reverse their characteristics.

Among McLuhan’s most significant contributions is his 1962 work, The Gutenberg Galaxy: The Making of Typographic Man. He discusses the reliance of primitive oral culture upon auditory perception and the elevation of vision above hearing in the culture of print. He wrote that his study “is intended to trace the ways in which the forms of experience and of mental outlook and expression have been modified, first by the phonetic alphabet and then by printing.” For McLuhan, the transformations from spoken word culture to typography and from typography to the electronic age extended beyond the mental organization of experience. In the Preface to The Gutenberg Galaxy, McLuhan summarized how he saw the interactive relationship of media forms with the whole social environment:

Any technology tends to create a new human environment. Script and papyrus created the social environment we think of in connection with the empires of the ancient world. … Technological environments are not merely passive containers of people but are active processes that reshape people and other technologies alike. In our time the sudden shift from the mechanical technology of the wheel to the technology of electric circuitry represents one of the major shifts of all historical time. Printing from movable types created a quite unexpected new environment—it created the public. Manuscript technology did not have the intensity or power of extension necessary to create publics on a national scale. What we have called “nations” in recent centuries did not, and could not, precede the advent of Gutenberg technology any more than they can survive the advent of electric circuitry with its power of totally involving all people in all other people.

As early as 1962—seven years before the creation of the Internet and nearly three decades before the birth of the World Wide Web—McLuhan anticipated the historical, far-reaching and revolutionary implications of the information and electronic age on the global organization of society. Although he eschewed determinism in any form, McLuhan pointed to the potential for electronic media to drive mankind beyond the national particularism which is rooted in the technical, socio-historical and scientific eras connected with the age of print. McLuhan later used the phrase “global village” to describe his vision of a higher form of non-national organization driven by the methods of human interaction that were brought on by “the advent of electric circuitry” and “totally involve all people in all other people.” For McLuhan, the transformation from the typographic and mechanical age to the electric age began with the telegraph in the 1830s. The new media created by the properties of electricity were expanded considerably with telephone, radio, television and the computer in the nineteenth and twentieth centuries. McLuhan also wrote that the electronic media transformation revived oral culture and displaced the individualism and fragmentation of print culture with a “collective identity.”

McLuhan’s examination of the historical clash of the electronic media with the social environment of print culture and his prediction that a new collective human identity will be established from the transition to a global structure beyond the present fragmented national identities is highly significant. It points to the coming of the societal transformations that will be required for electronic media to thoroughly overcome print media as a completed historical process. In much the same way that Gutenberg’s invention spread across Europe and the world and planted the seeds of foundational transformation—in technology, politics and science—that developed over the next three and a half centuries, we are today likewise in the incubator of the new global transformation of electronic media. Seen in this historically dynamic way, the worldwide spread of smartphones and social media to billions of people, despite national barriers placed upon the exchange of information as well as other differences such as language and ethnicity, shows that humanity is being transformed by the emergence of a new homogeneous global culture. For this development to achieve its full potential, the social organization of man must be brought into alignment, and there is no reason to believe that this adjustment from nations to a higher form of organization will take place with any less discontinuity than that of the period of world history that began with the rapid development of printing technology, the Enlightenment and the American and French Revolutions.

There are scientists and futurists who either proselytize or warn about the coming of the technological singularity, i.e., the moment in history when electronic media convergence and artificial intelligence will completely overtake the native capacities of humanity. The argument goes that these extensions of man will become irreversible, and civilization will be transformed in unanticipated ways toward either a utopian or a dystopian future, depending on whether one supports or opposes the promises of the singularity. The twentieth-century philosophical and intellectual movement known as transhumanism promotes the idea that the human condition will be dramatically improved through advanced technologies and cognitive enhancements. The dystopian opponents of transhumanist utopianism argue that technological advancements such as artificial intelligence should not be permitted to supplant the natural powers of the human mind on the grounds that they are morally compromising and that such a development poses an existential threat to society. Among these competing views, however, is the shared notion that the coming transformation of mankind will take place without a fundamental change in the social environment. Both the supporters and opponents of transhumanism envision that the extensions of man will evolve independently of any realignment of the economic or cultural foundations of society.

However, it is not possible to prognosticate about the future of communications technology outside of an understanding that the tendencies present in embryonic form nearly six centuries ago—particularly the democratization of information and knowledge that has been vastly expanded in our time—bring with them powerful impulses for broad and fundamental societal change. In a world where every individual has the potential to communicate as both publisher and consumer of information with everyone else on the planet—regardless of geographic location, ethnicity, language or national origin—it appears both possible and necessary that new and higher forms of social organization be achieved before this new media can carve a path to a truly post-printing age of mankind. While the existential threats are real, they do not come from the technology itself. The danger arises from the clash of the existing social structures against the expanding global integration of humanity. We have every reason to be optimistic about taking this next giant step into the future.

Concluded